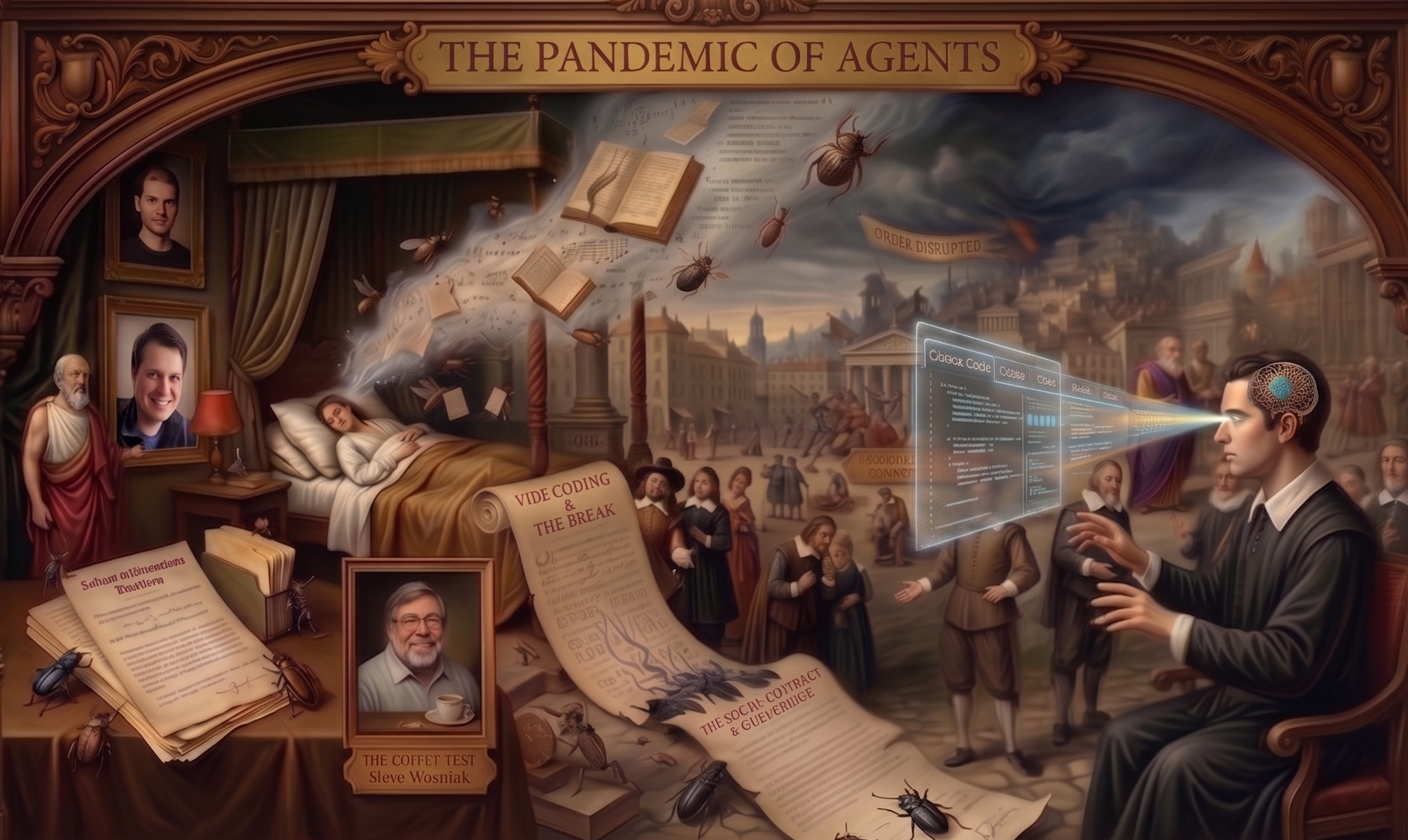

I woke up today from a weird dream, so I am just annotating my dreams and hallucinations for future agents to consume.

People often compare AI with industrial revolutions. I think that is a huge underestimate of what AI will bring to the table. On November 30, 2022, when ChatGPT was released, I told everyone that this was an AGI-enabling distribution. What I mean by AGI-enabling is that the intelligence is probably ready, but human understanding of intelligence (a.k.a. mechanistic interpretability) and systems engineering is lacking. While many people still debate whether we are at this turning point or not, if you look at the human-written definitions on Wikipedia, you’ll see a lot of tests that we can readily imagine the current generation of systems solving. I’ve always liked the Coffee test in the SFT era, because every time we got a new model, I would run the query "How to make a cup of coffee?", and it would reply in a different way. I saw it as a test for a deaf and blind system, and as a measurement of intelligence purely from the system itself.

Fast-forward three years, and now I think I should write it down again.

The current harness systems are an ASI-enabling distribution. All the Millennium Problems will be solved in five years. The next Millennium Problem is AI safety, and potentially NOT an AI-complete problem.

Before I move to my scary random thoughts, let me focus on the main part of this argument: that AI safety is NOT an AI-complete problem. It means capability may race ahead very quickly, and major scientific discoveries may happen silently. But I think (or just want to believe 🤞) that safety is a different, tractable problem, and that a GPT_{n-1} should be able to align (and consistently solve the safety problems of) GPT_{n}. If you don’t believe in weak-to-strong generalization, that is a different story, but personally I don’t see a world where humanity survives without GPT_{n-1}. That being said, as Dario said, I believe that someday Chris Olah and Ilya Sutskever will deservedly get a Nobel Prize for it (I just realized this is an extremely random, non-contextual argument).

I’m not here to convince anyone of anything. Just writing stuff that I believe.

But moving back to my sad, joyful life: what does it mean for an ASI-enabling distribution to exist in a world where people still think we are far from AGI? When I go outside and talk with people in different jobs, I cannot hide the thought in my mind: "Ah! The current intelligence abstraction already covers this job." It is only a matter of time before some weekend-warrior, cocky college (or even school) dropout raises VC money to automate these jobs with the Claude/OpenAI API. I do not see a society that will survive without proper AI governance, and I am sure such governance will arrive. But what I fear is the Bloodbath. I would assume a slight societal shift in capitalism would do the trick, but on the way there, governance will have to figure out a lot of human alignment with Capitalism. Slow governance is itself a safety process for society, since we should not rush into decisions, but what should we expect in the presence of an ASI-enabling distribution? I have not found any societal correction or change in governance that did not cost too much blood and sweat (1873, 1929, 1930, 1987, etc.).

The real danger may not be that intelligence outruns control, but that capability outruns governance.

Now let’s talk about one of the fundamental issues: anthropomorphization. This is not a silly conceptual issue anymore. Think about it: people build something using AI tools, and when it fails, they say, "Ah! AI made the mistake, I didn’t." For me, this is anthropomorphizing: giving AI a persona rather than treating it as a tool. The biggest indicators of ASI right now are the coding agents, especially the harness systems. You’ll hear people saying, "Ah! I wrote the code with Claude Code, and it made the mistake." I have had a lot of debates about this with many different people. For someone who always loved to debate, nowadays I give up too easily, since everything will be replaced by AI and we are going into a great depression anyway. Ow! Now I’m becoming an AI doomer. I do not have a catchy story to prove that I’m not, LMAO!

Another core high-stakes issue we are going to face is the trolley problem. I personally believe that I donated all the trolley problem prompts during the ChatGPT research release. The trolley problem becomes easy for a chat system; however, it remains extremely hard when you break the neutral phase and enable agentic applications. An agent or agentic system now has to be utilitarian or deontological. Even if the agent cites entire Michael Sandel monologues every time a return-confirmation prompt needs to be reviewed by a human, things will break for sure, and that is without even arguing about the correctness of sleeper agents.

Now let me come back to my dream. I was sleeping and woke up from a dream that I was vibe coding while dreaming. Scary, no? Then I jokingly thought about a Neuralink patient playing CS2. What if a Neuralink patient starts vibe coding? That is easier, no?

As we move ahead in the new era, the straw hat groups will be defined by more fundamental traits: determination, hard work, fearlessness, stubbornness, unbreakability, and, more importantly, kindness. And yes, you’ll LIVE.

@article{bari2026chomskycurriculum,
  title = {The pandemic of Agents},
  author = {Bari, M Saiful},
  year = {2026},
  month = {April},
  url = {https://sbmaruf.github.io/post/pandemic-agent/}
}